Our planet is bathed in a constant flow of energy from the Sun and the rest of the universe, which makes life on Earth possible. Sunlight is a type of radiation produced by nuclear reactions in the Sun’s core: it’s here, in this phenomenal Vulcan’s forge, that particles of light called photons begin their long journey to the Earth. Travelling at about 300,000 km/s, they cross the space between the Sun’s surface and the Earth in about 8 minutes, a finger snap considering the distance that separates us from our brightest star. Before reaching our comfortable chaise longue on a beach in Santorini, however, photons will probably have spent hundreds of thousands of years escaping the Sun’s incandescent core[1].

Light and color

Visible light, the tiny portion of the electromagnetic spectrum that the human eye can perceive, spans wavelengths between about 400 and 700 nm[2] (Fig. 1).

Figure 1. The portion of the electromagnetic spectrum that elicits a response from our visual system is narrow, only about 300 nm. It spans colours from violet (with the shortest wavelength) to red (the longest wavelength that our eyes can detect). The relationship between wavelength (λ) and the energy (E) of a photon is governed by the equation E=hc/λ, where h is Planck’s constant and c is the speed of light. The entire range of electromagnetic radiation includes radio waves, microwaves, infrared, ultraviolet, X-rays and gamma rays.
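To make the relationship E=hc/λ concrete, here is a minimal Python sketch that converts a wavelength into the energy of a single photon. The function names and the choice of electronvolts as the output unit are illustrative assumptions, not part of the original text; the constants are the standard SI values.

```python
# Photon energy from wavelength, E = h * c / wavelength (symbols as in Figure 1).
PLANCK_H = 6.62607015e-34         # Planck's constant in J*s (exact SI value)
LIGHT_C = 299_792_458.0           # speed of light in vacuum in m/s (exact SI value)
JOULES_PER_EV = 1.602176634e-19   # one electronvolt expressed in joules


def photon_energy_joules(wavelength_nm: float) -> float:
    """Energy of one photon, in joules, for a wavelength given in nanometres."""
    wavelength_m = wavelength_nm * 1e-9
    return PLANCK_H * LIGHT_C / wavelength_m


def photon_energy_ev(wavelength_nm: float) -> float:
    """Same energy expressed in electronvolts, a handier unit at this scale."""
    return photon_energy_joules(wavelength_nm) / JOULES_PER_EV


if __name__ == "__main__":
    for name, nm in [("violet", 400), ("green", 550), ("red", 700)]:
        print(f"{name:>6} {nm} nm: {photon_energy_ev(nm):.2f} eV")
```

Because energy is inversely proportional to wavelength, a violet photon at 400 nm carries about 3.1 eV, roughly 1.75 times the energy of a red photon at 700 nm.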

The sensory tool-kit

Life forms possess a suite of sensory receptors that enable them to interrogate the world in which they live and, often, their internal milieu at any given time. These sensors are responsive to stimuli of varied nature and operate over different stimulus magnitudes and timescales. Some of them are phasic: they have pronounced on/off effects and respond only to the rate of change of the stimulus magnitude, transmitting information only during its onset and offset. Other detectors are tonic and remain active while a given stimulus is continuously present, even if its amplitude remains constant over time. Combining these properties makes sensory receptors a formidable evolutionary toolkit to cope with ever-changing internal and external conditions. Some of these sensors respond to changes in mechanical energy (e.g., touch, vibration, and pressure); others are sensitive to nonmechanical stimuli (e.g., chemicals, light, and temperature). Unlike the wavelength of visible light or the frequency of sound, which are quantitative variables that can adopt an infinite number of possible values within a given range, chemical stimuli do not vary along a continuous dimension but rather form stimulus classes (e.g., sour, sweet). In addition, many species, from bacteria and honeybees to butterflies and fish, as well as bats and birds, are equipped to detect magnetic fields and use this information for navigation.[3]

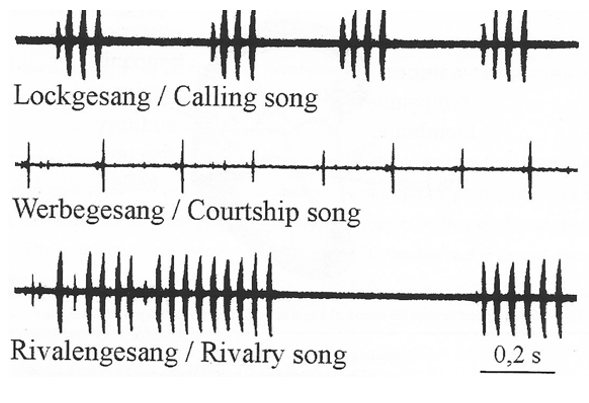

Humans and other animals may also exchange signals across different modalities to communicate. If the sender and receiver belong to the same species, this is known as intraspecific or conspecific communication; if they belong to different species, we talk about interspecific communication. For many species across the animal kingdom, these sensory messages support a rich behavioural repertoire. For this reason, being able to send and decode these signals unambiguously can make the crucial difference between finding a suitable mating partner and bumping into a rival conspecific (Fig. 2, adapted from Huber, 2000)[4].

Fig. 2. Sections of the song of the cricket Gryllus bimaculatus.

In some cases, conspecific communication can be learned; this is true of songbirds and of our own language. In other cases, however, it is phylogenetically programmed, as in insect acoustic communication. Furthermore, stereotyped traits, like the courtship call illustrated above, are invariant because they are subject to sexual selection and are optimally tuned to meet specific behavioural requirements. To conclude, sensory receptors represent sophisticated adaptations that animals have evolved to accommodate selective factors that ultimately increased the fitness of individuals.

Vision

Vision extracts information from photons: without light, it cannot occur. Whenever there is ambient illumination, vision can operate passively, that is, without requiring either the sender or the receiver to actively emit light. However, light in the ocean decreases with depth, with minimal light penetrating between 200 and 1000 metres and eternal darkness reigning below 1000 metres: the only light available at these depths must be generated by bioluminescent organisms, in which light production results from a chemical reaction[5]. Bioluminescence is energetically costly, but it helps deep-sea creatures to feed, defend themselves and look for a partner. In biomedical research, scientists have engineered the properties of some bioluminescent molecules to create assays that enable the localisation of specific cell populations and proteins within tissues. In another unexpected technological application, Japanese officers during the Second World War used the powder obtained from crushing the shells of bioluminescent crustaceans (marine ostracods) to read their documents without revealing their position to the enemy. In addition to using light, some fish living in murky aquatic environments can generate weak electrical signals or detect pressure waves in the water to communicate and orient themselves. Although these sensory organs operate only at close range, they have the advantage of retaining signal directionality, enabling these animals to track a signal back to its source.[6]

The ability to respond to light is common to many forms of life. Of around thirty-four animal phyla, around one-third lack a specialised organ for detecting light, another third possess some light-sensing organ, and the remainder have eyes[7]. To support behaviour, many organisms have evolved various solutions to detect photons. In most cases, these strategies are exquisitely tuned to meet specific eco-physical demands shaped by evolutionary constraints and selective forces. For example, the spectral properties of wing colouration in butterflies of the Pieridae family are tuned to the sensitivities of conspecifics’ photoreceptors, thus supporting intersexual recognition and facilitating display and concealment[8]. Bacteria use phototaxis to move towards or away from light stimuli. Some unicellular photosynthetic organisms, such as Euglena, sense changes in light intensity and direction and use this information to find the optimal environment for photosynthesis. Honeybees respond to light cues to find food, avoid predators, and detect mates and rivals.

A sensory organ like the vertebrate eye is nothing more than an image-forming device consisting of an array of sensors. In its simplest form, the first eye prototype was the eyespot, composed of a small number of photoreceptors (i.e., cells containing light-sensitive molecules) and pigment cells. Although the evolutionarily oldest eyespots would distinguish light from dark, they would not suffice to code complex light patterns.

In a further stage of evolution, invagination of these eyespots into a pit might have conferred on them the ability to sense the direction of incident light. Such an arrangement shades some receptors from light coming from one direction, and others from light coming from a different direction. A similar mechanism is used by some invertebrate larvae, contributing to the vertical migration of marine zooplankton, which is thought to represent the largest biomass transport on Earth[9]. Illuminating one of the two eyespots in these larvae alters the beating of nearby cilia, thereby locally reducing water flow and directing their swimming trajectory toward the light. This simple solution, by which light sensing is directly coupled to motility control, is crucial to support life as we know it.[10]

Primitive eyespots, such as the light-detecting organs present in some green algae and unicellular photosynthetic organisms, allow them to sense light direction and intensity, but image-forming eyes are more sophisticated tools that provide complex information about light wavelength, contrast, and polarisation (we will soon learn what these terms mean).

Although eyespots endowed animals with directional sensitivity, they did not support spatial vision, which requires the simultaneous comparison of light from different directions[11]. The addition of an optical system for collecting and focusing light probably equipped eyes to produce images, which turned out to be a tremendously successful solution: image-forming eyes appeared in six of the thirty-four extant metazoan phyla, which together account for about 96% of known species living today[12].

Structure of the human eye

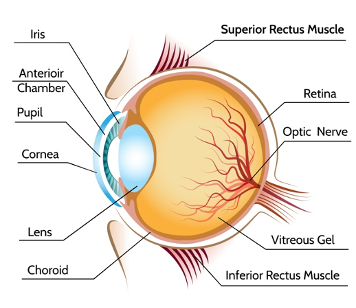

Light passes through an opening in the front of the eye called the pupil, whose size is adjusted by the iris to regulate the amount of light arriving at the lens. The lens then focuses light onto the retina at the back of the eye. The lens is attached to the ciliary muscles, which control accommodation, allowing the eye to focus on objects at different distances. To focus on distant objects, these muscles relax, resulting in a flat lens; when they contract, the lens becomes rounder, enabling focusing on nearby objects (Fig. 3).

Figure 3. The structure of the human eye (iStock).
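Accommodation can be illustrated with the thin-lens equation, 1/f = 1/d_object + 1/d_image. The sketch below is a deliberate simplification rather than a model of the real eye: it treats the whole optical system as a single thin lens and assumes a fixed lens-to-retina distance of 17 mm. Both the value and the function names are assumptions introduced here for illustration.

```python
import math

# Assumed lens-to-retina distance of a simplified, single-lens eye (metres).
IMAGE_DISTANCE_M = 0.017


def required_power_dioptres(object_distance_m: float) -> float:
    """Optical power 1/f (dioptres) that focuses the object onto the retina.

    Thin-lens equation: 1/f = 1/d_object + 1/d_image, distances in metres.
    Passing math.inf models a very distant object (1/inf evaluates to 0.0).
    """
    return 1.0 / object_distance_m + 1.0 / IMAGE_DISTANCE_M


def accommodation_dioptres(object_distance_m: float) -> float:
    """Extra power needed compared with the relaxed eye focused at infinity."""
    return required_power_dioptres(object_distance_m) - required_power_dioptres(math.inf)


if __name__ == "__main__":
    for distance in (math.inf, 6.0, 1.0, 0.25):
        print(f"object at {distance} m: "
              f"{required_power_dioptres(distance):.1f} D in total, "
              f"{accommodation_dioptres(distance):.1f} D of accommodation")
```

In this simplified picture, the nearer the object, the more optical power is needed, which is why the lens must become rounder for close viewing: reading at 25 cm requires about 4 dioptres more than looking at the horizon.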

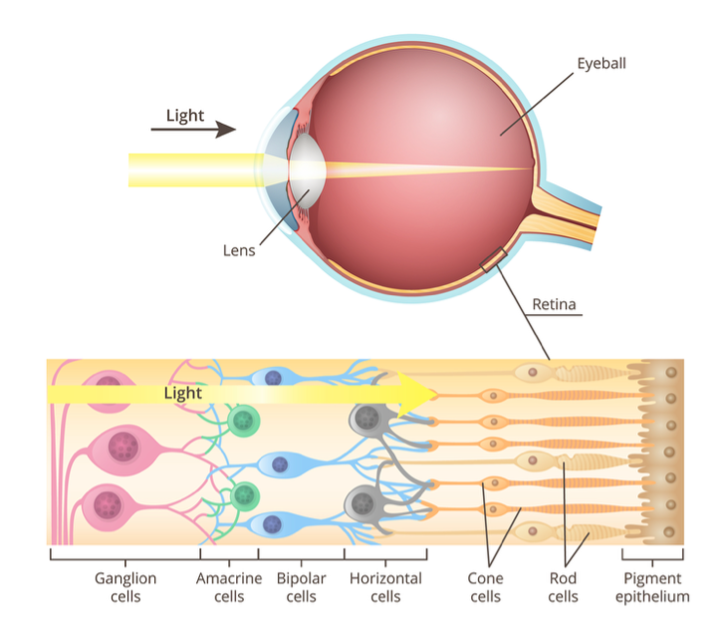

The retina is a sheet of light-sensitive neural tissue that covers the back of the eyeball; it’s here that the initial processing of visual information occurs. It has a stratified structure comprising layers of neurons interconnected by synapses, organised as discrete bands (Fig. 4). There are only five main classes of neurons. Farthest from the lens lie the photoreceptors, which comprise the rods, essential for night vision, and the cones, which operate in daylight and allow us to perceive colour. Both types of photoreceptors contain light-absorbing chemicals. When photoreceptors absorb photons, they generate an electrical signal. Cones are connected to bipolar cells, which synapse with ganglion cells, whose axons leave the eye to form the optic nerve, which conveys visual information to the brain. The small area through which ganglion cell axons exit the retina is a blind spot: we cannot see with it because it’s simply devoid of photoreceptors. The more sensitive rods connect to their own bipolar cells, which feed into the cone circuitry through another cell type, the amacrine cell. In addition, two cell types, amacrine and horizontal cells, make predominantly lateral connections. In terms of signalling, ganglion cells are unique because they send their output as stereotyped, all-or-none voltage changes across their plasma membrane called action potentials. The other cell types signal mainly through graded changes in voltage. It might seem odd that light has to propagate through the fluid in the centre of the eye and pass through three cell layers and their synaptic contacts to reach the sensor, but this works well because these layers are mostly transparent. However, for maximal sharpness in the centre of view, at the point called the fovea, all cells, bar photoreceptors, are displaced to the side to minimise interference with the optical path.

Figure 4. Structure of the retina (iStock)

We mentioned earlier that we are endowed with photoreceptors, which come in two classes that differ in sensitivity: cones are used for daytime vision and are less sensitive than rods, which support night vision. These photoreceptors are not evenly distributed across the retina: cones are more abundant at the fovea, a small area in the retina responsible for sharp vision, and rods predominate in the retinal periphery. Most people have three different kinds of cones, each containing a different type of pigment that confers sensitivity to light across different parts of the visible spectrum.

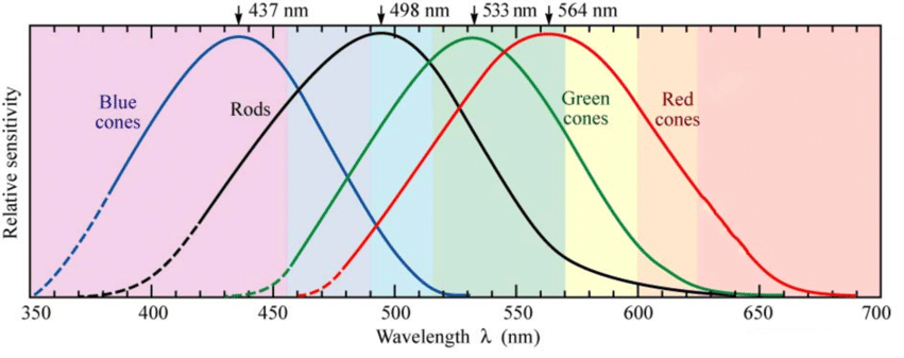

The three cone types are usually referred to as long-, middle-, and short-wave receptors, or L, M, and S for the wavelengths of the spectrum to which they are most sensitive, with peak sensitivities in the yellowish-red, green, and violet-blue regions of the spectrum. Have you heard about the RGB system before? Red, green and blue are the primary colours of light and when mixed in different proportions, millions of colours can be generated. If we look at a high-magnification view of an LCD display, we can detect individual pixels, with each pixel consisting of three smaller dots that emit red, green, and blue light, as shown below (Fig. 5).


Figure 5. Photomacrograph of a 15-inch MacBook Pro display from 2011. In this example, the side of a pixel is 0.231 mm (white bar). From: Mitjà, C., Escofet, J., “LCD displays performance comparison by MTF measurement using the white noise stimulus method,” Proceedings of SPIE Vol. 7867, 78670I (2011).

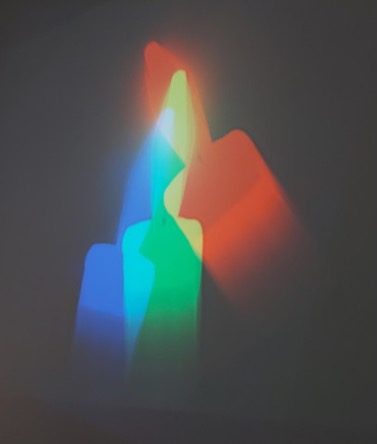

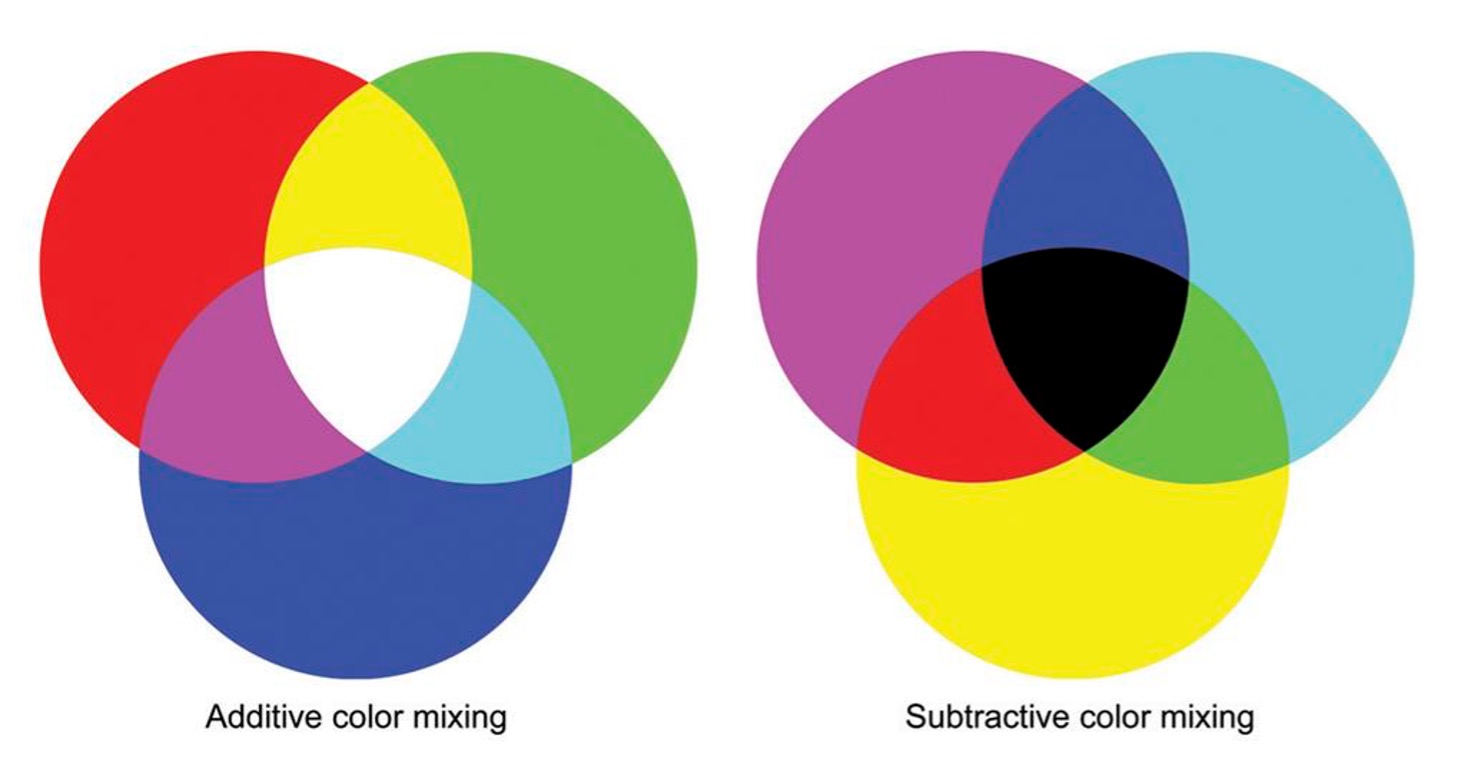

When lights of different colours are mixed together, we talk of additive mixing because all mixed wavelengths reach our eyes. The primary colours of additive colour mixing are red, green and blue, as mentioned before. If we mix red, green, and blue light in equal proportions, we will see white light. To illustrate this point, consider Nam June Paik’s video installation “One Candle” (1989), in which the flickering flame of a single candle is filmed in real time and displayed by CRT video projectors that use small, high-brightness cathode-ray tubes of the three primary colours as the image-generating element. Paik split the live image among the three RGB projectors, avoiding a full merge of the images, and therefore filled the room with partially overlapping candles in different colour mixtures (Fig. 6).

Remember that we cannot see light beams travelling through the air; rather, we see light that has been reflected or refracted by objects.

Figure 6. One Candle, Nam June Paik (1989)

What happens when we mix paints of different colours? Pigments absorb certain colours and reflect others, and each time another colour of paint is added to the mix, more colours are absorbed and fewer are reflected. This is why we talk of subtractive colour mixing, the principle that underlies the choice of dyes for a printer cartridge: yellow, magenta and cyan. Mixing all of these colours together will absorb all the light, and in the end, we will only see black because no light will be reflected back to our eyes (Fig. 7).

Figure 7. Additive (light) and subtractive (pigment) colour mixing.
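The two kinds of mixing can be sketched in a few lines of Python. This is an idealised toy model under stated assumptions: each light or pigment is reduced to three numbers between 0 and 1 (red, green, blue), lights add their intensities, and pigments multiply their reflectances. Real paints and inks behave less cleanly, and all names below are illustrative.

```python
from typing import Tuple

RGB = Tuple[float, float, float]  # red, green, blue, each between 0.0 and 1.0

RED: RGB = (1.0, 0.0, 0.0)
GREEN: RGB = (0.0, 1.0, 0.0)
BLUE: RGB = (0.0, 0.0, 1.0)

# Idealised printer dyes, described by the fraction of each channel they reflect.
CYAN: RGB = (0.0, 1.0, 1.0)     # absorbs red
MAGENTA: RGB = (1.0, 0.0, 1.0)  # absorbs green
YELLOW: RGB = (1.0, 1.0, 0.0)   # absorbs blue


def mix_lights(*lights: RGB) -> RGB:
    """Additive mixing: intensities add per channel, clipped at full intensity."""
    return tuple(min(1.0, sum(channel)) for channel in zip(*lights))


def mix_pigments(*pigments: RGB) -> RGB:
    """Subtractive mixing: every pigment removes light, so reflectances multiply."""
    result = (1.0, 1.0, 1.0)  # start from white paper, which reflects everything
    for pigment in pigments:
        result = tuple(r * p for r, p in zip(result, pigment))
    return result


if __name__ == "__main__":
    print("red + green + blue light:", mix_lights(RED, GREEN, BLUE))
    print("red + green light:", mix_lights(RED, GREEN))
    print("cyan + magenta + yellow dye:", mix_pigments(CYAN, MAGENTA, YELLOW))
    print("cyan + yellow dye:", mix_pigments(CYAN, YELLOW))
```

In this model, the three lights together give (1.0, 1.0, 1.0), that is, white, while the three dyes together give (0.0, 0.0, 0.0), black. Red and green light combine into yellow, whereas cyan and yellow dye leave only green.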

The response of each cone type depends on both the wavelength and the intensity of light. If you look at their sensitivity plots, you will soon notice that the coding of signals is ambiguous: a given level of activation of the red channel at one wavelength can be matched by the same photoreceptor responding to light of a different intensity in the yellow-orange part of the spectrum (Fig. 8).

Figure 8. Spectral sensitivity of cone cells in the human eye (adapted from Dowling, 1987). In black, the spectral sensitivity of rods.

For example, if a single long-wave photoreceptor gives a particular response to a given amount of light (say, 1000 photons at 640 nm), it would give twice as large a response to a light twice as intense (2000 photons at the same wavelength). However, a response of that same magnitude could also be elicited by 1000 photons at 620 nm, an orange light to which the receptor is more sensitive. This means that the magnitude of the response cannot, on its own, be used to identify the colour of the light that activated a given receptor type. We would be able to distinguish a mixture of red and green light from pure yellow if we had separate cone photoreceptors peaking in the red, yellow, and green regions, but that is not the case. To our cones, red and green light mixed together look exactly the same as pure yellow light. For this reason, given the broad spectral sensitivity of each receptor and the resulting coding ambiguity, the visual system compares the activation of each cone type with that of the other cone types. Red light, for instance, will elicit a higher response from red cones than from green cones. Even if the amount of red light increases and both receptors increase their responses, red cones will still be activated more strongly than green cones. Thus, the ratio between red and green cone responses gives an unequivocal readout for light of yellow-to-red colour. Why do we need the blue channel then? Simply because the red and green cones do not span the entire spectrum; if they did, our visual system could take the ratio of their response signals and obtain a unique value for every wavelength. A third channel, blue, is therefore required to distinguish all spectral colours unmistakably.
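The ambiguity of a single receptor, and the way a ratio resolves it, can be checked numerically. The sketch below is a toy model, not measured data: it assumes bell-shaped (Gaussian) sensitivity curves peaking at 560 nm for the long-wave cone and 530 nm for the middle-wave cone, with an arbitrary common width of 45 nm, and it assumes that the response is simply the photon count multiplied by the sensitivity. All names and parameter values are illustrative.

```python
import math

# Assumed, illustrative parameters (not measured cone data).
L_PEAK_NM = 560.0   # long-wave ("red") cone peak
M_PEAK_NM = 530.0   # middle-wave ("green") cone peak
WIDTH_NM = 45.0     # common width of the bell-shaped curves


def sensitivity(wavelength_nm: float, peak_nm: float) -> float:
    """Relative sensitivity (0 to 1) of a cone at the given wavelength."""
    return math.exp(-((wavelength_nm - peak_nm) ** 2) / (2.0 * WIDTH_NM ** 2))


def cone_response(photons: float, wavelength_nm: float, peak_nm: float) -> float:
    """Toy response: photon count weighted by the sensitivity at that wavelength."""
    return photons * sensitivity(wavelength_nm, peak_nm)


def photons_for_same_response(photons: float, from_nm: float,
                              to_nm: float, peak_nm: float) -> float:
    """Photon count at to_nm that matches the response to `photons` at from_nm."""
    return photons * sensitivity(from_nm, peak_nm) / sensitivity(to_nm, peak_nm)


def l_to_m_ratio(photons: float, wavelength_nm: float) -> float:
    """Ratio of long-wave to middle-wave cone responses for one light."""
    return (cone_response(photons, wavelength_nm, L_PEAK_NM)
            / cone_response(photons, wavelength_nm, M_PEAK_NM))


if __name__ == "__main__":
    matched = photons_for_same_response(2000, 640, 620, L_PEAK_NM)
    print(f"2000 photons at 640 nm are matched by {matched:.0f} photons at 620 nm")
    print(f"L/M ratio at 640 nm, 1000 photons: {l_to_m_ratio(1000, 640):.2f}")
    print(f"L/M ratio at 640 nm, 2000 photons: {l_to_m_ratio(2000, 640):.2f}")
    print(f"L/M ratio at 620 nm, 1000 photons: {l_to_m_ratio(1000, 620):.2f}")
```

With these assumed curves, roughly 1000 photons at 620 nm drive the long-wave cone as strongly as 2000 photons at 640 nm, so that cone alone cannot tell the two lights apart. The ratio between the two cone types, by contrast, stays the same when the photon count doubles and changes only when the wavelength changes.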

Luminance and night vision

Luminance is the perceived lightness or darkness of an image and is determined by the visual system’s sensitivity to a given wavelength. It allows us to perceive brightness differences across regions of a visual scene. It is not a physical measurement and should not be confused with the amount of light that gets reflected by objects (luminosity) nor with the spectral properties of that reflected light (colour). Rather, luminance depends on how sensitively the visual system responds to light of varying colour. Contrast is the difference in luminance or colour that makes an object visible against a background of different luminance or colour. In a snow scene, for example, the bright white tones make objects that aren’t covered in snow stand out in stark contrast, and this is due to the difference in luminance. The best way to understand luminance is simply to convert a colour image to grayscale. If we look at the grayscale version of Velázquez’s Meninas below, we can clearly recognise all the figures depicted in the painting, correctly grasp how they are organised in space and understand the depth of the scene. Getting luminance changes right is very important in everyday life because depth perception, three-dimensionality, movement, and spatial organisation are all processed by parts of our visual system that are insensitive to colour.

Figure 9: Las Meninas, as painted by Diego Velázquez (left), and displayed in grayscale (right).
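A grayscale conversion of this kind can be sketched as a weighted sum of the red, green and blue values of each pixel. The example below uses the Rec. 709 weights, a common engineering convention rather than anything specified in this text, and it assumes that pixel values are linear intensities between 0 and 1 (typical image files are gamma-encoded and would need to be linearised first). The function names are illustrative.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # linear red, green, blue in the range 0.0 to 1.0

# Rec. 709 weights: green contributes most to luminance, blue least.
LUMINANCE_WEIGHTS = (0.2126, 0.7152, 0.0722)


def relative_luminance(pixel: Pixel) -> float:
    """Weighted sum of the three channels, between 0.0 (black) and 1.0 (white)."""
    return sum(weight * channel for weight, channel in zip(LUMINANCE_WEIGHTS, pixel))


def to_grayscale(image: List[List[Pixel]]) -> List[List[Pixel]]:
    """Replace every pixel with a grey of the same relative luminance."""
    return [
        [(lum, lum, lum) for lum in map(relative_luminance, row)]
        for row in image
    ]


if __name__ == "__main__":
    tiny_image = [[(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]]
    for pixel in to_grayscale(tiny_image)[0]:
        print(f"grey level: {pixel[0]:.4f}")
```

The unequal weights reflect the fact that the eye is not equally sensitive to all colours: pure green maps to a much lighter grey than pure blue of the same intensity. A consequence is that two pixels of very different hue can end up with the same grey value.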

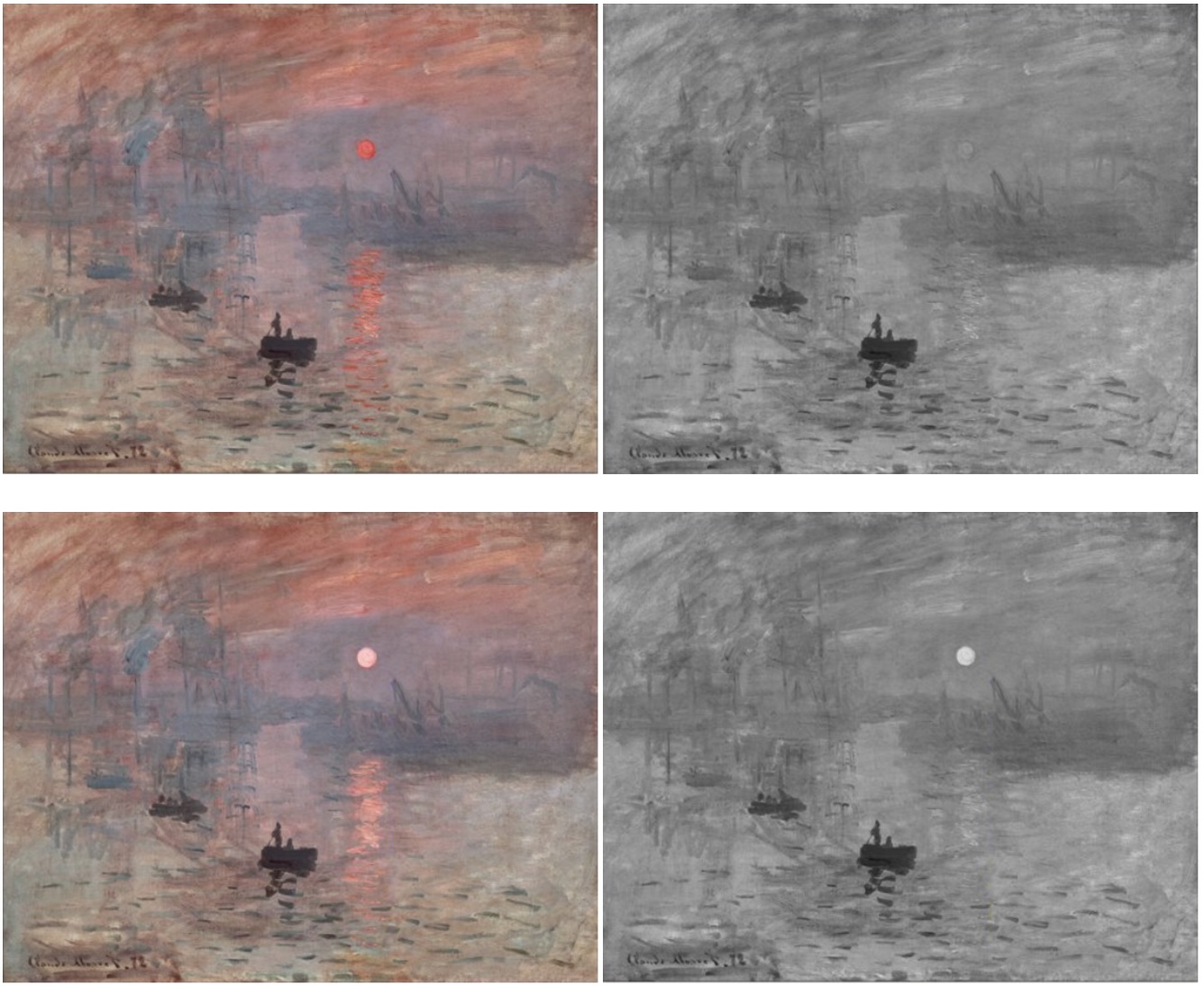

Artists can tweak luminance to achieve special effects, as illustrated here by a painting by Claude Monet, analysed by Margaret Livingstone in her wonderful book “Vision and Art: The Biology of Seeing”[13]. The industrial revolution was in full swing, and Paris had just undergone Haussmann’s deep architectural surgery when Monet painted “Impression, soleil levant” in 1872. Its unfinished appearance, when compared with contemporary paintings that strove to reproduce reality in its smallest details, challenged audiences and critics alike. The image of a row of fuzzy boats dissolving in the haze of a busy harbour, against a background of blurred chimneys releasing plumes into the air, inaugurated a new type of art that was less concerned with faithfully reproducing reality than with conveying the subjective experience it evoked and the beholder’s inner state. The choice of subject (in this case, a banal scene drawn directly from everyday life and devoid of any obvious lyricism) and its treatment, where loose brushstrokes eroded identities, resulted in a new template that helped launch Impressionism. In the original image, the sun and its reflection on the water appear much brighter than the rest of the scene, which is dominated by light blue and grey tones. We are inclined to accept Monet’s proposal because it does not contradict our expectation of the sun being by far the brightest source of light in a natural daylight scene. However, first appearances can be deceptive: the grayscale version of this picture reveals that the sun has the same luminance as the grey background. Rebalancing the sun’s luminance to make it higher than the background, to better approximate a real-life setting, fails to achieve the same effect and removes this painting’s main appeal. Monet achieved heightened visual contrast by pairing incandescent red and cool blue, which are at opposite ends of the spectrum, while keeping the scene’s overall luminance uniform.

Figure 10. Top left: Impression, soleil levant, painted by Claude Monet in 1872. Top right: Although in the coloured picture the sun appears brighter than the landscape, a grayscale reproduction shows that it has the same luminance as the grey background. The sun is therefore invisible to the luminance-detecting part of our visual system. Bottom left: I increased the sun’s luminance to make it look brighter. Bottom right: The grayscale version confirms that the sun is now brighter than the background. Adapted from Livingstone, Margaret. Vision and art: the biology of seeing. New York: Abrams, 2014.

Monet wisely exploited an interesting feature of our visual system: from the earliest stages of visual processing, colour and luminance are analysed by anatomically distinct brain areas. The ability to see in colour is a hallmark of primate vision; luminance detection is evolutionarily older and is present in all mammals. Livingstone argues that the flickering appearance of the sun may arise precisely from an ambiguity that the brain cannot easily resolve: our “luminance” detector does not distinguish between the sun and the background, yet this is difficult to reconcile with the interpretation of the same scene delivered by the “colour” system.

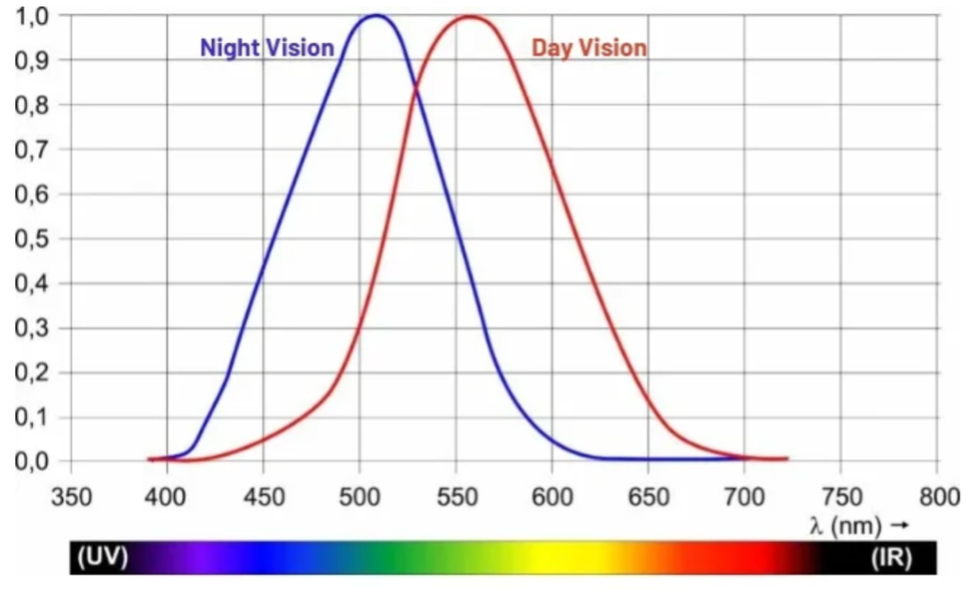

How does our perception change with varying illumination conditions? In dim light, we can barely detect colours, although we may still be able to recognise the shapes of individual objects. In fact, our night vision is colour-blind, and we map visual scenes to grayscale; that is, we primarily detect changes in luminance. As noted previously, this task relies on our rod photoreceptors, which are more sensitive than cones but cannot, on their own, signal colour. The higher the ambient light intensity, the larger the photoreceptor response. The response also depends on wavelength, however: if we plot the combined response of all three cone photoreceptors under daylight conditions as a function of wavelength, we obtain the curve shown in Fig. 11. This implies that we are most sensitive to the green-yellow range, and we would need more blue photons than green photons to elicit an equivalent photoreceptor response.

Figure 11: Apparent brightness of different wavelengths of light (day vision).

References

[1] Mitalas, R. and Sills, K. 1992, “On the photon diffusion time scale for the sun”, The Astrophysical Journal, Vol. 401, p. 759-760.

[2] 1 nm = 1 × 10⁻⁹ m

[3] Nimpf S and Keays DA, Myths in magnetosensation, iScience Volume 25, Issue 6, 104454 (2022). https://doi.org/10.1016/j.isci.2022.104454

[4] Huber F, 50 Jahre Forschung über akustische Kommunikation bei Grillen: Verhalten und Neurobiologie 1-31, Verhandlungen des Westdeutschen Entomologentags Düsseldorf, 2000

[5] Certain creatures produce light through a chemical reaction in which luciferin is oxidised by the enzyme luciferase.

[6] Pirih, P 2011, ‘Vision, pigments and structural colouration of butterflies’, Doctor of Philosophy, Groningen.

[7] Fernald R.D., Casting a Genetic Light on the Evolution of Eyes. Science 313, 1914-1918 (2006). https://doi.org/10.1126/science.1127889

[8] Pirih, P 2011

[9] Biomass refers to the total mass of living organisms in a given area or ecosystem, including the organic matter they produce or consume.

[10] Jékely, G., Colombelli, J., Hausen, H. et al. Mechanism of phototaxis in marine zooplankton. Nature 456, 395–399 (2008). https://doi.org/10.1038/nature07590

[11] Land, Michael F. Current Biology, Volume 15, Issue 9, R319-R323. https://doi.org/10.1016/j.cub.2005.04.041

[12] Fernald, R.D., 2006

[13] Livingstone M, Vision and art: the biology of seeing, New York, Harry Abrams, 2002, page 38-40